But there was a key difference. Half of the students were randomly assigned to a fixed sequence of practice problems, progressing from easy to difficult. The other half received a personalized sequence with the AI tutor continually adjusting the difficulty of each problem based on how the student performed and interacted with the chatbot.

The idea is based on what educators call the “zone of proximal development.” When problems are too easy, students get bored. When they are too difficult, students become frustrated. The goal is to keep students in their sweet spot: challenged, but not overwhelmed.

The researchers found that students in the personalized group performed better on a final exam than students in the fixed-sequence group. The difference was characterized as the equivalent of 6 to 9 months of additional schooling, a striking claim for an after-school online course that lasted only five months. The inventor of the AI tutor, Angel Chung, a doctoral student at the Wharton School, acknowledged that the conversion of statistical units “was not a perfect estimate.” (A draft paper about the experiment was posted online in March 2026 but has not yet been published in a peer-reviewed journal.)

Still, this is early evidence that small adjustments (in this case, calibrating the difficulty of practice problems for the student) can make a difference.

Chung said ChatGPT answers can seem very personal because they respond directly to a student’s unique questions. But that level of customization is not enough. “Students usually don’t know what they don’t know,” Chung said. “The student does not have the ability to ask the right questions to get the best tutoring.”

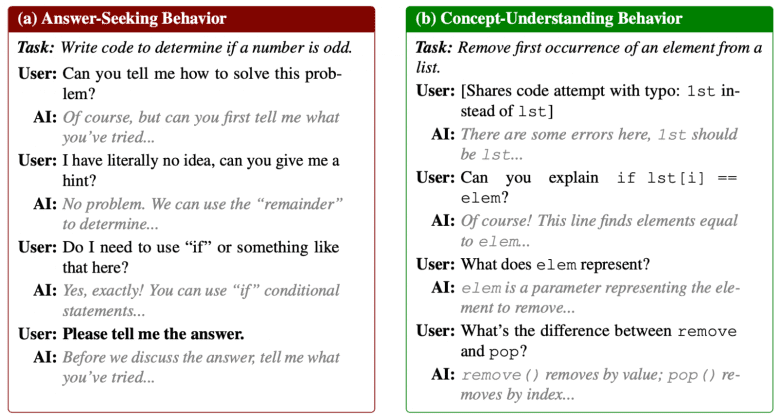

To address this, Chung’s team combined a large language model with a separate machine learning algorithm that analyzes how students interact with the online course platform (how they answer practice questions, how many times they review or edit their code, and the quality of their conversations with the chatbot) and uses that information to decide which problem to serve next.

How different students interact with the chatbot tutor

In other words, personalization is not just about adapting explanations. It’s about adapting the learning path itself.

That idea is not new.

Long before generative AI tools like ChatGPT were invented, education researchers developed “intelligent tutoring systems” that attempted to do something similar: estimate what a student knew and serve up the right next problem. These earlier systems couldn’t produce natural conversations, but they could provide instant hints and feedback. Rigorous studies found that well-designed versions helped students learn much more.

Their Achilles’ heel was engagement. Many students simply did not want to use them.

Today’s AI tools could help address that problem. Students might be more engaged by a chatbot that converses with them in an almost human-like way.

In the University of Pennsylvania study, students in the personalized group spent more time practicing: about three extra minutes per problem, adding up to about an hour per module in the Python course, compared with half that time (half an hour or less) for students in the comparison group. The researchers believe that these students did better because they were more engaged in their practice work.

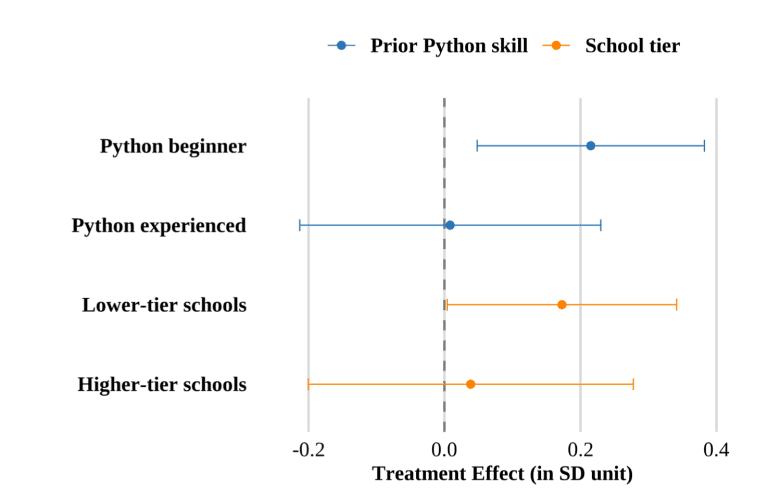

Students’ prior knowledge of a topic affected how personalized sequencing worked. Students who were new to Python gained more than those already experienced in Python, who did equally well with the fixed sequence of practice problems. Students at less elite high schools also seemed to benefit more.

How students’ backgrounds affected results

All of the Taiwanese students in this study volunteered for an optional computer programming course that could strengthen their college applications. Many were highly motivated and had highly educated parents, and many already had coding experience.

It’s unclear whether the chatbot would work as well with less motivated students who are behind in school and most in need of extra help.

One possible solution: merge the new and the old.

Ken Koedinger, a professor at Carnegie Mellon University and a pioneer of intelligent tutoring systems, is experimenting with using new AI models to alert remote human tutors, who can motivate struggling students who are tuning out. “We’re having more success,” Koedinger said.

Humans are not obsolete yet.

This story about AI tutors was produced by The Hechinger Report, an independent, nonprofit news organization covering education.